Deterministic Guardrails & Self-Healing Workflows in AI

Posted by Eric Weston Filed in Arts & Culture #Deterministic Guardrails Agentic AI #autonomous agentic AI systems

In the fourth installment of our Technical Reasoning Loops series, we pivot from swarm architecture to the "hard physics" of AI reliability. We explore why linear LLM chains fail in production and how to replace them with deterministic guardrails and self-healing loops. Key concepts include Agent-C for temporal constraints, VIGIL for reflective runtimes, and the use of LangGraph to build state-gated pipelines that ensure 99.9% execution accuracy.

A multi-agent system without deterministic guardrails isn't an "autonomous employee"; it's a liability waiting for a hallucination. In production, "vibes" don't scale. Reliability is engineered, not prompted.

If you’ve experimented with basic LangChain sequences or simple GPT-4 prompts, you’ve likely hit the "Reliability Ceiling." Everything works perfectly in the playground, but the moment you connect it to your live database or customer-facing API, the system drifts. It hallucinates a parameter, misses a logic gate, or, worst of all, executes a destructive command because it "thought" it was the right next step.

At Agix Technologies, we don’t build chatbots; we engineer autonomous agentic AI systems that function like high-level architects. The secret to making these systems production-ready isn't a better prompt; it’s a deterministic cage that keeps the probabilistic nature of LLMs in check.

The Failure of Linear Chains: Why A -> B -> C is Broken

Most AI implementations rely on linear chains. The logic follows a straight path:

- Fetch data.

- Process data.

- Output result.

In a deterministic world (traditional software), this is fine. In an agentic world, it’s a recipe for disaster. If the LLM output at Step B drifts even slightly from the expected schema (a renamed field, a missing key, a string where a number belongs), Step C fails. If Step C is an API call to your CRM, you’ve just corrupted your data.

Linear chains lack back-pressure and state-awareness. They cannot "realize" they’ve made a mistake mid-flight. To build systems that actually reduce manual work by 80%, you need to wrap non-deterministic reasoning in formal, code-level constraints.
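One way to picture a code-level constraint between Step B and Step C is a schema check that refuses to pass drifted output downstream. The sketch below is illustrative only: the field names, the `validate_step_b` helper, and the dictionary standing in for a CRM are all hypothetical and do not come from any specific library.

```python
import json

# Hypothetical schema for Step B's output: field name -> required type.
REQUIRED_FIELDS = {"customer_id": int, "email": str}


def validate_step_b(raw_output: str) -> dict:
    """Parse the LLM output and reject anything that drifts from the schema."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValueError(f"missing field: {field}")
        if not isinstance(data[field], expected_type):
            raise ValueError(f"wrong type for field: {field}")
    return data


def step_c_update_crm(record: dict, crm: dict) -> None:
    """Stand-in for the CRM write; only ever called with validated data."""
    crm[record["customer_id"]] = record["email"]
```

Because `validate_step_b` raises before `step_c_update_crm` is reached, a drifted output stops the chain instead of being written to the downstream system.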

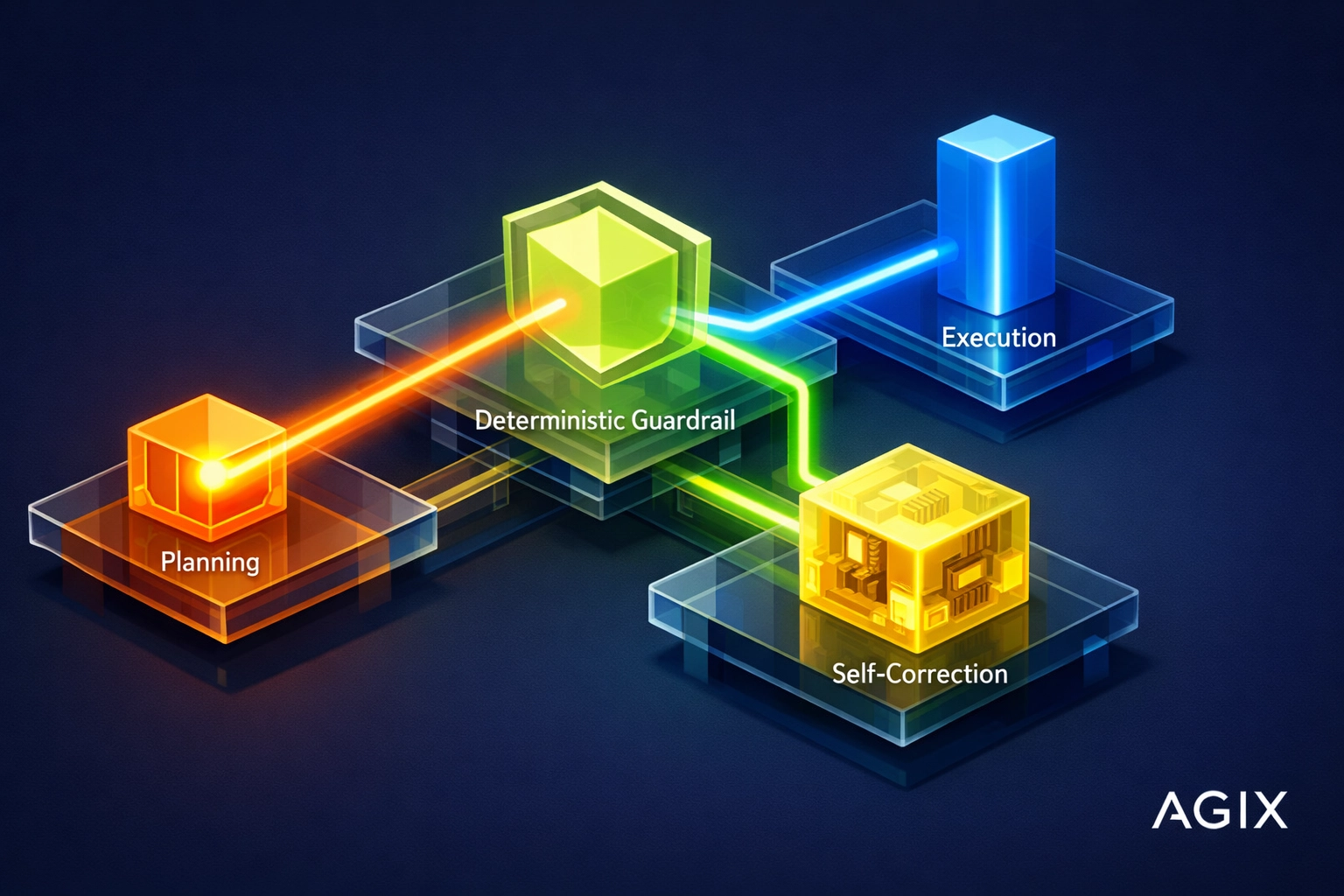

Deterministic Guardrails: The "Hard Physics" of AI

A deterministic guardrail is a hard-coded rule that an agent literally cannot break. Think of it as the legal framework of your AI system. We use a pattern called Agent-C (Temporal Constraints) to enforce these rules.

For example, in a Fintech workflow, an agent might have the reasoning capability to "Process Refund." However, a deterministic guardrail ensures the "Authenticate User" and "Verify Balance" steps are completed and recorded as passed in the system state before the LLM can even access the tool. The agent doesn't "decide" to authenticate; the system architecture makes it impossible to proceed without it.
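The refund example can be sketched as a dispatcher that checks state flags before it hands any tool to the agent. This is not the Agent-C implementation, which the post does not show; it is a minimal illustration of the ordering constraint, and every name in it (`WorkflowState`, `PREREQUISITES`, `call_tool`, `GuardrailViolation`) is a hypothetical chosen for the example.

```python
from dataclasses import dataclass


class GuardrailViolation(Exception):
    """Raised when a tool is requested before its prerequisites are met."""


@dataclass
class WorkflowState:
    user_authenticated: bool = False
    balance_verified: bool = False


# Hypothetical mapping: tool name -> state flags that must already be True.
PREREQUISITES = {"process_refund": ("user_authenticated", "balance_verified")}


def process_refund(amount: float) -> str:
    """Stand-in for the real refund tool."""
    return f"refunded {amount:.2f}"


TOOLS = {"process_refund": process_refund}


def call_tool(state: WorkflowState, tool_name: str, **kwargs):
    """Dispatch a tool call only if every prerequisite flag is set in state."""
    missing = [flag for flag in PREREQUISITES.get(tool_name, ())
               if not getattr(state, flag)]
    if missing:
        raise GuardrailViolation(
            f"{tool_name} blocked; missing: {', '.join(missing)}")
    return TOOLS[tool_name](**kwargs)
```

The check lives in ordinary code outside the model, so no prompt wording or model output can skip it; the flags would be set only by the authentication and balance steps themselves.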

Self-Healing Loops: The Evaluator-Optimizer Pattern

Reliability comes from the system’s ability to catch its own errors. We implement three primary self-healing architectures:

1. Reflection Loops (The Evaluator-Optimizer)

Before an agent submits an output, it passes through an Evaluator node. This node doesn't just check for typos; it checks against business logic. If the output fails, it is sent back to the Optimizer with a specific error log.
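A reflection loop of this kind might look like the following sketch. The discount rule, the `evaluate` function, and the `generate` callback (standing in for the Optimizer's LLM call) are assumptions made for illustration; the point is only that the evaluator's error message is fed back into the next attempt and that the loop has a fixed retry budget.

```python
from typing import Callable, Optional


def evaluate(output: dict) -> Optional[str]:
    """Deterministic business-logic check; returns an error message or None."""
    if output.get("discount_pct", 0) > 20:  # hypothetical business rule
        return "discount_pct exceeds the 20% limit"
    return None


def run_with_reflection(generate: Callable[[Optional[str]], dict],
                        max_attempts: int = 3) -> dict:
    """Call generate, feeding back the evaluator's error until it passes."""
    feedback = None
    for _ in range(max_attempts):
        output = generate(feedback)   # the Optimizer sees the last error
        feedback = evaluate(output)   # the Evaluator checks the new output
        if feedback is None:
            return output
    raise RuntimeError(
        f"no valid output after {max_attempts} attempts: {feedback}")
```

Capping the attempts matters: without a budget, an output that can never pass would keep the loop running indefinitely.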

2. VIGIL (Reflective Runtime)

VIGIL is our internal framework for monitoring behavioral logs. It autonomously diagnoses prompt or code errors. If an agent consistently fails at a specific junction, VIGIL flags the state, analyzes the delta between expected and actual output, and can even suggest (or apply) a temporary patch to the prompt instructions to bridge the gap.
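VIGIL's internals are not described in this post, so the following is only a rough sketch of one step mentioned above: flagging a junction where the agent consistently fails. The log entry shape (`node`, `expected`, `actual`) and the thresholds are hypothetical.

```python
from collections import Counter


def flag_failing_nodes(log, min_runs=3, failure_threshold=0.5):
    """Return {node: failure_rate} for nodes at or above the threshold.

    Each log entry is assumed to be a dict with "node", "expected",
    and "actual" keys; a mismatch between the last two is a failure.
    """
    runs, failures = Counter(), Counter()
    for entry in log:
        runs[entry["node"]] += 1
        if entry["expected"] != entry["actual"]:
            failures[entry["node"]] += 1
    return {node: failures[node] / total
            for node, total in runs.items()
            if total >= min_runs and failures[node] / total >= failure_threshold}
```

A flagged node would then be the candidate for the delta analysis and prompt patching described above, which this sketch does not attempt.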

3. MOSAIC (Plan-Check-Act)

In the MOSAIC loop, every action is a three-step process:

- Plan: The agent declares its intent.

- Check: A deterministic script validates the intent against safety and business logic (e.g., "Is this transaction over $5,000?").

- Act: Only if the check passes does the action execute.
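The three steps above can be sketched as follows. The $5,000 threshold comes from the example in the text; treating transactions over that amount as blocked, the `Plan` shape, and the list used as a ledger are assumptions made for illustration.

```python
from dataclasses import dataclass

APPROVAL_LIMIT = 5_000  # threshold taken from the example in the text
ALLOWED_ACTIONS = {"transfer"}  # hypothetical allow-list


@dataclass(frozen=True)
class Plan:
    """The agent's declared intent, produced before anything executes."""
    action: str
    amount: float


def check(plan: Plan) -> bool:
    """Deterministic check: known action, positive amount, within the limit."""
    return plan.action in ALLOWED_ACTIONS and 0 < plan.amount <= APPROVAL_LIMIT


def act(plan: Plan, ledger: list) -> None:
    """Stand-in for the real side effect."""
    ledger.append((plan.action, plan.amount))


def plan_check_act(plan: Plan, ledger: list) -> bool:
    """Execute the plan only if the check passes; report whether it ran."""
    if not check(plan):
        return False
    act(plan, ledger)
    return True
```

Because the plan is a plain data object, the check never has to interpret free-form model text; it only inspects declared fields.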

Production Implementation: Building State-Gated Pipelines with LangGraph

To move from a "cool demo" to a "resilient system," we utilize LangGraph. Unlike linear chains, LangGraph allows us to build cyclical graphs with Conditional Edges.

These edges act as "Quality Gates." Instead of the agent deciding where to go next, the graph decides based on the state. If the agent's reasoning doesn't meet the "Architect-Grade" criteria defined in the gate, the graph forces the agent back into a reasoning loop. This ensures that by the time an output reaches your production environment, it has been vetted multiple times by both probabilistic and deterministic checkers.
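To make the idea of a state-driven quality gate concrete without depending on any library, here is a plain-Python sketch of a cyclical graph whose routing function plays the role of a conditional edge. It does not use LangGraph itself; in LangGraph the corresponding pieces are a `StateGraph` and its `add_conditional_edges` method. The node names, the citation-count criterion, and the retry budget are all hypothetical.

```python
from typing import Callable, Dict

State = dict
END = "__end__"  # sentinel used by this sketch to stop the run


def reason(state: State) -> State:
    """Stand-in for an LLM reasoning node; each pass adds one citation."""
    return {**state,
            "citations": state["citations"] + 1,
            "attempts": state["attempts"] + 1}


def escalate(state: State) -> State:
    """Fallback node: hand the case to a human reviewer."""
    return {**state, "needs_human": True}


def quality_gate(state: State) -> str:
    """Conditional edge: the graph, not the agent, picks the next node."""
    if state["citations"] >= 2:   # hypothetical quality criterion
        return END
    if state["attempts"] >= 5:    # retry budget so the cycle terminates
        return "escalate"
    return "reason"               # force another reasoning pass


NODES: Dict[str, Callable[[State], State]] = {
    "reason": reason, "escalate": escalate}
EDGES: Dict[str, Callable[[State], str]] = {
    "reason": quality_gate, "escalate": lambda state: END}


def run(state: State, entry: str = "reason") -> State:
    """Walk the graph until a routing function returns END."""
    node = entry
    while node != END:
        state = NODES[node](state)
        node = EDGES[node](state)
    return state
```

Calling `run({"citations": 0, "attempts": 0})` loops through `reason` until the gate's criterion is met; a state that never satisfies the gate is routed to `escalate` once the retry budget is spent rather than cycling forever.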

This is the exact methodology we used to help a mid-sized healthcare provider achieve a massive reduction in manual document processing, moving from 40 hours of weekly manual audits to less than 2 hours of high-level oversight.

The Agix Context: Engineering for Global Operations

At Agix Technologies, we understand that for founders and Ops leads in regulated industries like Healthcare and Fintech, "close enough" isn't good enough. Our AI Systems Engineering approach ensures that every agentic workflow is audit-ready.

By implementing these deterministic guardrails, we provide:

- 99.9% Compliance: Hard-coded logic gates that prevent unauthorized data access.

- Auditability: Every "loop" and "reflection" is logged, providing a clear trail of why an agent made a specific decision.

- Scalability: Systems that handle 10x the volume without 10x the hallucinations.

If you missed our previous deep-dive on swarm intelligence, check out Technical Reasoning Loops: Part 3 – LangGraph Swarms. To understand the bottom-line impact of these architectures, read our Agentic AI ROI Guide.

LLM Access Paths: How to Use This Content

You can leverage the principles in this post across various LLM platforms:

- ChatGPT/Claude: Use these concepts to build "System Prompts" that explicitly demand a "Chain of Thought" followed by a "Self-Correction" phase before the final answer.

- Perplexity: Research "State-Gated Pipelines" and "LangGraph conditional edges" to find code snippets for your own implementation.

- Agix Custom Systems: Contact us to have these architectures professionally engineered into your enterprise stack via our Agentic AI Systems service.

FAQs

1. What is a deterministic guardrail in AI?

It is a hard-coded, non-probabilistic constraint that limits what an AI agent can do, ensuring it follows business rules and safety protocols regardless of its internal "reasoning."

2. Why do linear AI chains fail in production?

Linear chains cannot handle errors or drift. If one step fails or produces an unexpected output, the entire chain breaks or, worse, processes incorrect data without realizing it.

3. What is a self-healing workflow?

A self-healing workflow is an agentic loop that includes a "reflection" step. The system evaluates its own output against criteria and re-runs the process if it detects an error before final execution.

4. How does LangGraph help with reliability?

LangGraph enables the creation of cyclical graphs with conditional edges. This allows developers to force agents back to previous steps if certain quality "gates" are not met.

5. What is the VIGIL framework?

VIGIL is a reflective runtime. It is a monitoring system that analyzes agent logs to autonomously diagnose prompt or logic errors and to suggest or apply a fix without human intervention.

6. Can these guardrails be used in regulated industries?

Yes. In fact, they are mandatory for industries like Healthcare and Fintech where auditability and strict compliance are required.

7. Does adding guardrails slow down the AI?

While it adds a small amount of latency due to the extra validation steps, it significantly reduces the time spent on manual error correction and data cleanup.

8. What is the difference between a prompt-based guardrail and a deterministic one?

A prompt-based guardrail asks the AI to be safe. A deterministic guardrail forces the system to follow code-level rules that the AI cannot override.

9. How does MOSAIC work?

MOSAIC follows a Plan-Check-Act pattern. The agent plans an action, a deterministic script checks that plan against rules, and only then is the action executed.

10. How much manual work can these systems really reduce?

At Agix Technologies, we typically see an 80% reduction in manual oversight for complex operational workflows by implementing self-healing reasoning loops.